Sync Data Warehouse to Salesforce Marketing Cloud 2026: SFTP vs API — The Honest Decision Guide

Team Genetrix • April 1, 2026 • 6 min read

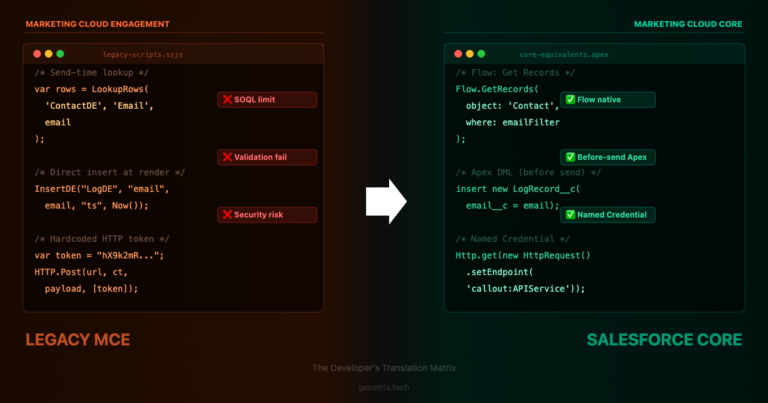

Every team that uses Salesforce Marketing Cloud alongside a data warehouse eventually hits the same question: what is the best way to sync data from the warehouse into SFMC on a regular schedule? The answer is almost always SFTP for weekly batch syncs — but the reasoning behind that matters, and understanding where the API fits is what stops teams from defaulting to the wrong tool for the wrong use case.

We see both mistakes regularly. Some teams reach for the API because it feels more modern, then run into rate limits and character encoding issues at volume. Others use SFTP for real-time event data that should never be batch-processed in the first place. Getting the choice right upfront prevents a class of integration headaches that are genuinely painful to unpick once they are in production.

| Criterion | SFTP (Enhanced FTP + Import Activity) | API (DE Rows endpoint) |
|---|---|---|
| Best for | Weekly, daily, or hourly batch syncs. Data does not need to reach SFMC within minutes of the event occurring. | Individual events that need to reach SFMC within minutes: form submissions, purchases, real-time profile updates, API-triggered journeys. |
| Volume | No practical limit for SFMC Enhanced FTP. The Import Activity handles millions of rows per file reliably, with no rate limits. | Sync API: best under 100K records per batch. The Async API handles larger volumes but must be enabled on your account and adds complexity. |
| How it works | The warehouse exports a CSV to SFMC’s Enhanced FTP on a schedule. An Automation Studio Import Activity picks up the file and loads it into the target Data Extension. | Authenticate via OAuth 2.0 client credentials. POST to the DE Rows endpoint (sync or async). Rows are upserted directly into the target Data Extension. |
| Data action | Add and Update (upsert) or Overwrite, configurable per import. Upsert is recommended to avoid wiping records on partial file failures. | Upsert only: updates existing records and inserts new ones. No Overwrite option via API. Requires a primary key on the DE. |
| Failure modes | If the file does not arrive before the Import Activity runs, the automation fails with a clear error. Recover by re-dropping the file and re-running the automation. | Rate limit errors (HTTP 429), token expiry, and partial batch failures that require row-level error parsing. Harder to monitor and recover from at volume than file-based imports. |
| Authentication | SSH public key authentication. No OAuth token refresh required, so there is no risk of credential expiry silently breaking the integration overnight. | OAuth 2.0 access tokens expire after 20 minutes. Your integration must refresh tokens automatically, or calls will start failing once the token expires. |
| Verdict | No API rate limits. Handles millions of rows. Clear failure modes. Simple SSH auth. Works natively with Automation Studio scheduling. No custom code required to operate. | Use for individual subscriber actions, form submissions, or triggered journey entries, not for pushing large historical datasets from a warehouse on a schedule. |
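
To make the token-expiry point concrete, here is a minimal sketch of a token cache that refreshes shortly before expiry. The class name `TokenCache`, the 60-second safety margin, and the `auth_base_url` parameter are illustrative assumptions, not part of any SFMC SDK. The `/v2/token` path and the `access_token` / `expires_in` response fields reflect our understanding of the SFMC client-credentials flow and should be checked against the current documentation for your account.

```python
import json
import time
import urllib.request
from typing import Callable, Optional


def fetch_sfmc_token(auth_base_url: str, client_id: str, client_secret: str) -> dict:
    """Request a token via the client-credentials grant (assumed /v2/token path)."""
    body = json.dumps({
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
    }).encode("utf-8")
    request = urllib.request.Request(
        f"{auth_base_url}/v2/token",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


class TokenCache:
    """Hypothetical helper: reuse a token until shortly before it expires."""

    def __init__(
        self,
        fetch_token: Callable[[], dict],
        clock: Callable[[], float] = time.monotonic,
        safety_margin_s: float = 60.0,  # assumption: refresh one minute early
    ) -> None:
        self._fetch_token = fetch_token
        self._clock = clock
        self._safety_margin_s = safety_margin_s
        self._token: Optional[str] = None
        self._expires_at = 0.0

    def get(self) -> str:
        now = self._clock()
        if self._token is None or now >= self._expires_at:
            payload = self._fetch_token()
            self._token = payload["access_token"]
            lifetime = float(payload["expires_in"])
            self._expires_at = now + lifetime - self._safety_margin_s
        return self._token
```

In use, you would construct the cache once, for example `TokenCache(lambda: fetch_sfmc_token(base_url, client_id, client_secret))`, and call `get()` before every API request. Injecting the fetch function and clock keeps the refresh logic testable without network access.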

The SFTP Import Pattern for Weekly Warehouse Syncs

For most data warehouse to SFMC syncs — weekly, daily, or even hourly batch refreshes — the SFTP path is the right choice. There are no API limits to manage, no OAuth token refresh to maintain, and the Import Activity in Automation Studio gives you a scheduled, auditable pipeline with clear error notifications without writing a single line of code.

The pattern has three components: a scheduled export job from your warehouse that produces a clean CSV, a file transfer process that drops the file onto SFMC’s Enhanced FTP at the right time and with the right file name, and an Automation Studio automation that triggers when the file arrives and imports it into the target Data Extension.
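
The first two components can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a reference implementation: the function names are hypothetical, the upload step relies on the third-party `paramiko` library, and the host, username, key path, and `/Import` folder are placeholders for your own account's values.

```python
import csv
from datetime import date
from pathlib import Path
from typing import Iterable, Mapping, Sequence, Union


def dated_filename(prefix: str, run_date: date) -> str:
    """Build a name such as subscribers_20260401.csv."""
    return f"{prefix}_{run_date:%Y%m%d}.csv"


def write_export(
    rows: Iterable[Mapping[str, str]],
    fieldnames: Sequence[str],
    out_dir: Union[str, Path],
    prefix: str,
    run_date: date,
) -> Path:
    """Write rows as a UTF-8 CSV with a header row and quote-wrapped fields where needed."""
    path = Path(out_dir) / dated_filename(prefix, run_date)
    with path.open("w", encoding="utf-8", newline="") as handle:
        writer = csv.DictWriter(handle, fieldnames=fieldnames, quoting=csv.QUOTE_MINIMAL)
        writer.writeheader()
        writer.writerows(rows)
    return path


def upload_to_enhanced_ftp(
    local_path: Union[str, Path],
    host: str,
    username: str,
    key_path: str,
    remote_dir: str = "/Import",  # assumption: match your Import Activity file location
) -> None:
    """Upload the file over SFTP using SSH key authentication."""
    import paramiko  # third-party dependency, imported lazily

    client = paramiko.SSHClient()
    client.load_system_host_keys()  # unknown hosts are rejected by default
    client.connect(hostname=host, port=22, username=username, key_filename=key_path)
    try:
        sftp = client.open_sftp()
        try:
            sftp.put(str(local_path), f"{remote_dir}/{Path(local_path).name}")
        finally:
            sftp.close()
    finally:
        client.close()
```

The third component, the automation that imports the file, is configured in Automation Studio and needs no code.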


File Requirements and Common Failure Points

Most SFTP import failures come down to file format issues rather than connection problems. Getting these right before the first run prevents the majority of support headaches:

| Requirement | Correct Setting | Note |
|---|---|---|
| Encoding | UTF-8 | Required. Non-UTF-8 encoding causes silent data corruption on special characters such as accented names and non-Latin characters. |
| Delimiter | Comma (CSV) | Default. Tab-delimited is also supported. Avoid pipe-delimited unless your data contains commas in values and you cannot quote-wrap fields. |
| Headers | Match DE field names exactly | Required. Column names in the CSV header row must exactly match the Data Extension field names, including case. |
| Primary key column | Present and populated for every row | Required. If the DE has a primary key, every row must have a value in that column or the row will be skipped. |
| File naming pattern | Include date in filename | Best practice, e.g. subscribers_20260401.csv. Makes debugging easier and prevents file conflicts. |
| File location on FTP | /Import/ subfolder | Configure the Import Activity File Location to point to the exact subfolder. Files in the wrong folder will not trigger the automation. |
| Max file size | No hard limit for Enhanced FTP | For very large files (> 1 million rows), test end-to-end before running in production to confirm import times are within your schedule window. |
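
Several of these requirements can be checked before the file ever leaves the warehouse. The sketch below is one possible pre-flight check, assuming a comma-delimited file; `preflight_check` and its message strings are hypothetical names chosen for illustration. It verifies that the bytes decode as UTF-8, that the header row matches the expected Data Extension field names exactly (including case), and that the primary key column is populated in every record.

```python
import csv
import io
from typing import List, Sequence


def preflight_check(raw: bytes, de_fields: Sequence[str], primary_key: str) -> List[str]:
    """Return a list of problems found in the export; an empty list means it passed."""
    try:
        text = raw.decode("utf-8")
    except UnicodeDecodeError as exc:
        # Stop here: the remaining checks are meaningless on mis-encoded data.
        return [f"not valid UTF-8 at byte {exc.start}"]

    problems: List[str] = []
    reader = csv.DictReader(io.StringIO(text, newline=""))
    header = list(reader.fieldnames or [])

    # Exact, case-sensitive comparison against the Data Extension field names.
    missing = [name for name in de_fields if name not in header]
    unexpected = [name for name in header if name not in de_fields]
    if missing:
        problems.append(f"missing columns: {missing}")
    if unexpected:
        problems.append(f"unexpected columns: {unexpected}")

    # Every record needs a non-empty primary key value.
    if primary_key in header:
        for record_no, row in enumerate(reader, start=1):
            if not (row.get(primary_key) or "").strip():
                problems.append(f"record {record_no}: empty {primary_key}")
    return problems
```

Running this as the last step of the export job, and refusing to upload when the list is non-empty, catches most format problems before they reach the Import Activity.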

Implementation Checklist

- Confirm your target Data Extension has a primary key defined before configuring the Import Activity — Add and Update mode requires one to work correctly.

- Set the CSV export to UTF-8 encoding. Test with a record that contains an accented character before going live.

- Verify CSV column headers match Data Extension field names exactly, including capitalisation.

- Use a File Drop trigger in Automation Studio rather than a fixed schedule. This decouples the automation from the export timing and is more resilient to export delays.

- Set the Import Activity to Add and Update (upsert). Never use Overwrite for a weekly data warehouse sync — if the file arrives incomplete or empty, Overwrite will wipe your DE.

- Include a date stamp in the export filename so you can identify which file was processed and when.

- Configure import error notification emails in the Import Activity so failures surface immediately rather than being discovered days later.

- After the first successful run, verify record counts in the target DE match the exported file row count minus the header row.
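
The final checklist item, comparing row counts, can be sketched as follows. The function names are hypothetical, and the Data Extension row count is passed in as a plain number because how you obtain it (from the UI or a query) depends on your setup. Note that with Add and Update, duplicate primary keys in the file collapse into one record, so a mismatch is a prompt to investigate rather than proof of failure.

```python
import csv
from pathlib import Path
from typing import Union


def count_data_rows(csv_path: Union[str, Path]) -> int:
    """Count CSV records after the header, ignoring fully blank lines."""
    with Path(csv_path).open("r", encoding="utf-8", newline="") as handle:
        reader = csv.reader(handle)
        next(reader, None)  # skip the header row
        return sum(1 for record in reader if record)


def check_import(csv_path: Union[str, Path], de_row_count: int) -> str:
    """Compare the exported row count with the count observed in the Data Extension."""
    exported = count_data_rows(csv_path)
    if exported == 0:
        return "empty export: do not import"
    if exported != de_row_count:
        return f"mismatch: exported {exported}, Data Extension has {de_row_count}"
    return "ok"
```

Counting with the `csv` module rather than counting newlines matters when quoted fields contain line breaks, since those would otherwise inflate the total.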

Frequently Asked Questions

Is SFTP really more scalable than the REST API for syncing warehouse data to SFMC?

For a weekly batch sync of warehouse data, yes: SFTP is the more scalable approach in practical terms. The REST API route introduces OAuth token management, SFMC API rate limits, and row-level error handling at volume. SFTP has none of those constraints and handles millions of rows natively. The API is genuinely better for real-time event data, but “feels more modern” is not a good reason to take on the operational complexity of an API integration for something a file drop handles cleanly.

What is the difference between a File Drop trigger and a scheduled trigger in Automation Studio?

A File Drop trigger fires the moment a matching file lands on the FTP regardless of time, which means your automation is always responding to actual data rather than running on a fixed clock that might fire before the export is ready. A scheduled trigger fires at a set time whether a file is present or not. If the file is late, the automation fails. File Drop is almost always preferable for data warehouse imports because export completion times can vary.

What if we need both batch syncs and real-time data in SFMC?

Run both in parallel. The SFTP import writes to one DE (or set of DEs) for the batch historical data. The API writes to a separate event DE for real-time triggers. The two paths do not interfere with each other. What you want to avoid is trying to make one mechanism do both jobs. The constraints of real-time API calls and batch file imports are incompatible enough that mixing them into a single pipeline creates unnecessary complexity.
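
For the real-time path, the request body is a list of rows, each split into key fields and value fields. The sketch below shows one way to build that payload and split it into small batches. It assumes the synchronous rowset format (`keys` / `values` objects, posted to a path of the form `/hub/v1/dataevents/key:{externalKey}/rowset`), which should be verified against the current SFMC REST documentation. The function names and the batch size are hypothetical.

```python
from typing import Any, Dict, Iterator, List, Mapping, Sequence


def to_rowset(
    records: Sequence[Mapping[str, Any]],
    key_fields: Sequence[str],
) -> List[Dict[str, Dict[str, Any]]]:
    """Split each record into the primary key fields and the remaining values."""
    rowset = []
    for record in records:
        keys = {name: record[name] for name in key_fields}
        values = {name: value for name, value in record.items() if name not in key_fields}
        rowset.append({"keys": keys, "values": values})
    return rowset


def batched(items: Sequence[Any], size: int) -> Iterator[List[Any]]:
    """Yield consecutive slices of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield list(items[start:start + size])
```

Each batch would then be serialized with `json.dumps` and sent with a bearer token in the `Authorization` header. Keeping batches small limits how many rows need to be retried after an HTTP 429 or a partial failure.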

How long do files stay on SFMC’s Enhanced FTP?

Files on SFMC’s Enhanced FTP are automatically deleted after 21 days. This is a platform default you cannot change. If you need to retain a historical archive of exported files, configure your warehouse export job to also write to your own cloud storage (S3, Azure Blob, GCS) before or alongside the SFTP transfer. Do not use SFMC’s FTP as a file archive.

Designing a Data Warehouse to SFMC Integration?

Getting the architecture right at the start avoids costly rework once data volumes grow. Genetrix helps teams design and implement reliable SFMC data pipelines — from initial sync architecture through to monitoring and error handling in production.

© 2026 Genetrix Technology · Salesforce Consulting Partner · Pune, India & Las Vegas, US

Published: April 1, 2026 · Category: Marketing Cloud · Data Integration